Uber and Self-Driving Cars

We need to take an impartial and unemotional approach to assessing the risks of the ways we allow technology to manage our lives. The question we should be asking is this: Are self-driving cars safer or less safe than human-driven vehicles?

You’ve probably heard by now about the self-driving Uber vehicle that killed someone.

READ: Uber self-driving car kills pedestrian in first fatal autonomous crash

This is a double tragedy. First, someone needlessly died. Second, the accident is likely to dampen public enthusiasm for a new technology that could ultimately save many lives.

Self-driving vehicles are long overdue given astounding advances in telecommunications, automation, and robotics. If we can operate sophisticated military weapons (drones) and drop deadly explosives on people on the other side of the world using nothing more than signals beamed by satellite from remote locations, it seems we should be able to harness similar technology for something more humane.

Just as distressing is the widespread public misconception about safety and risk that often clouds good judgment. We don’t always think logically. In fact, we often overreact when we perceive danger (recall the infamous overreaches of the Patriot Act). In the wake of this traffic death, expect a new wave of opposition to self-driving cars and trucks. People are afraid.

What’s your first thought if you see a driverless car? Most of us are likely to gawk at the sight. We’re not yet accustomed to seeing an empty driver’s seat. It’s even a bit scary. High-tech stuff intimidates lots of people. We’re afraid, usually of things we can’t control and don’t understand.

Instead, let’s try to be reasonable. Let’s allow science to work for us. What we need is an impartial and unemotional approach to the ways we allow technology to manage our lives. The question we should be asking is this: Are self-driving cars safer or less safe than human-driven vehicles? That is the only question that matters.

Yes, a pedestrian killed by a self-driving car is a terrible incident. Joint public-private inquiry and oversight absolutely must be implemented to improve, if not guarantee, safeguards. But let’s not get carried away here. How many pedestrians would have been killed if all the self-driving vehicles now being tested throughout the United States had been driven by humans instead?

Let’s acknowledge that accidents do happen. To err is human. Every time we get into a car, we accept some risk of dying. Even walking down the street entails some risk. It’s quite possible, even likely, that human drivers would have been responsible for more accidents had there been no self-driving cars on the road. Certainly, once this technology improves to an acceptable level, automated vehicles will be much safer than those with human drivers.

Why do I believe this?

Admittedly, my knowledge of self-driving vehicles and the associated technologies is almost zero. Still, I’m willing to go on record with a few suppositions: no self-driving vehicle will ever be drunk, stoned on drugs, or asleep at the wheel. No self-driving vehicle will ever be distracted by a text message or a passenger. No self-driving vehicle will ever instigate a case of road rage. Furthermore, no self-driving vehicle will speed, run a red light, or otherwise break traffic laws. In short, once this emerging technology improves, we will all be much safer.

There’s a valid comparison that supports the argument. Air travel is far safer now than it was years ago, mainly because of advances in technology similar to those behind self-driving cars. There are more planes in the air today than at any time in history, yet airline disasters have become exceedingly rare. This is especially true in the United States. It’s never been safer to fly on a commercial airline.

Boeing is currently testing airplanes that fly on their own. Unlike self-driving cars, which are a relatively new concept in the public consciousness, commercial flying is already heavily automated. Pilots don’t hand-fly us all the way from takeoff to landing. Most of the journey is planned and controlled by a computerized autopilot.

READ: Would You Fly on an Airliner Without a Pilot?

Of course, a human pilot is always in the cockpit, for at least two reasons. First, human pilots instill confidence in fliers. This is why crew members for major airlines continue wearing outdated military-style uniforms, even though such antiquated customs serve no purpose. Second, a human pilot can always intervene in an emergency. Passengers aren’t worried that their lives hinge on a tiny microchip making all the necessary in-flight adjustments, because we’re comforted knowing that a real pilot can seize the flight controls if something goes terribly wrong.

The implications of inevitable advances in technology, including self-driving cars, trucks, trains, and planes, are worth debating. Millions of jobs will be at stake. Taxi drivers, truckers, train engineers, and pilots could soon become about as relevant as blacksmiths. Automation will continue to displace workers. That’s a big concern that will require an adult conversation.

However, let’s not bury our heads in the sand and pretend that technologies that change our lives will go away, because they won’t. They’re here to stay. When tragedy occurs and technologies fail, as they sometimes will, that’s not the time to retreat. It’s time to work harder to make things better.

READ MORE: Self-Driving Car Crash: Who is Liable?

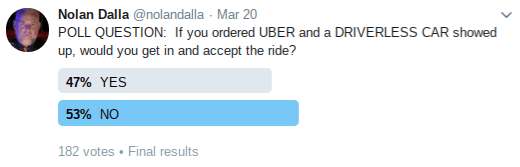

All this being said, I’ll leave you with a question: If you ordered an Uber and a driverless car showed up, would you get in and accept the ride?

ADDENDUM: Here are results from my Twitter poll:

When I started my civilian career as an air traffic controller with the FAA in 1963, Washington was attempting to automate air traffic control. They are still trying and have wasted many, many billions of taxpayer dollars without success. Even I could have told them that, as the old saying goes, “Give me the ability to know what is possible,” etc. Drones (for the most part), self-driving cars (for the most part), automobile super batteries (for the most part), Teslas and Elon Musk (for the most part), and good old DC have obviously not yet been exposed to wisdom.